wankerness

That's the optimistic view. I suspect companies that know what they're doing and aren't beholden to shareholders will do what you say. But I expect plenty more to go all-in on it and face consequences down the road somewhere.

Maybe some, but I can vouch that at least some companies are being very cautious around AI for a whole host of reasons. A lot of companies feel like their value is in the team they've cultivated, not so much lines of code or some other arbitrary measure of productivity. Some companies recognize that using AI could put you in hot water for IP and copyright given that you can only train an AI to "be a programmer" by feeding it someone else's code. I think smart companies will be evaluating for themselves what they can and can't get out of AI before jumping to any conclusions about who or what it will replace.

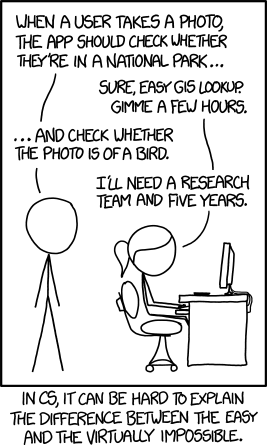

I certainly think some will try to replace their programmers with AI, and there will be some successes, but I expect even more failures. Because, as Demiurge said:

And I don't mean that as a jab to say that anyone who believes AI can replace a programmer must be a bad programmer, but not all software jobs are equivalent. It's not like code generation or automation are new concepts in the world of software. Automated testing and CI etc. didn't replace QA, for example.

I think AI is kind of like blockchain in that it's currently a stupid piece of technology that is basically the technological equivalent of a buzzword, which everyone's falling all over themselves to loudly say they have in their product, and in a few months that fad will be over. I think it's far more dangerous than blockchain, though, in that it's actually causing bad management to try and remove real workers and is already costing thousands of people their jobs, and in that some uses of it that are actually effective will continue being used. Especially with idiot execs that make AI-generated posters for their movies, or get it to write copy, or whatever. Those are considered "good enough" by the vast majority of people that look at them, so I'm afraid it's here to stay unless there are some stunning pro-labor, anti-exec Supreme Court decisions (yeah right, with the conservative wackos in charge) that basically make all AI-generated art/writing trained on real art/writing a copyright infringement.

I saw a news article yesterday about how OpenAI had developed a tool for teachers to detect with 99.9% accuracy whether their students had used ChatGPT, but they'd been sitting on it for a year 'cause "30% of ChatGPT users are opposed to being able to be caught," basically. So, there are plenty of "customers" out there that are the problem, too.